Redesigning a website, changing a page URL or restructuring content shouldn’t mean losing traffic or rankings. Whatever the reason for the change, one thing remains constant: if you don’t redirect a URL correctly, all your hard work turns to dust.

Redirects act like digital forwarding addresses. They keep your website structure manageable and help users and search engines navigate from an old link to a new one without confusion, dead ends or “page no longer exists” errors.

A proper redirect tells browsers and search engines:

“Hello, this page moved – send visitors here instead”

Understanding redirects is essential for SEO, user experience and long-term site stability.

Whether you’re updating a single permalink or restructuring an entire website, implementing the correct type of redirect isn’t optional.

This guide outlines the types of redirects, up-to-date methods for setting them up, when to use them, the mistakes to avoid and best practices for redirecting URLs.

By the end, you’ll be able to redirect a URL confidently and correctly without harming your rankings.

What are redirects?

Redirects are response instructions which tell browsers and search engines to forward traffic from one URL to a different one.

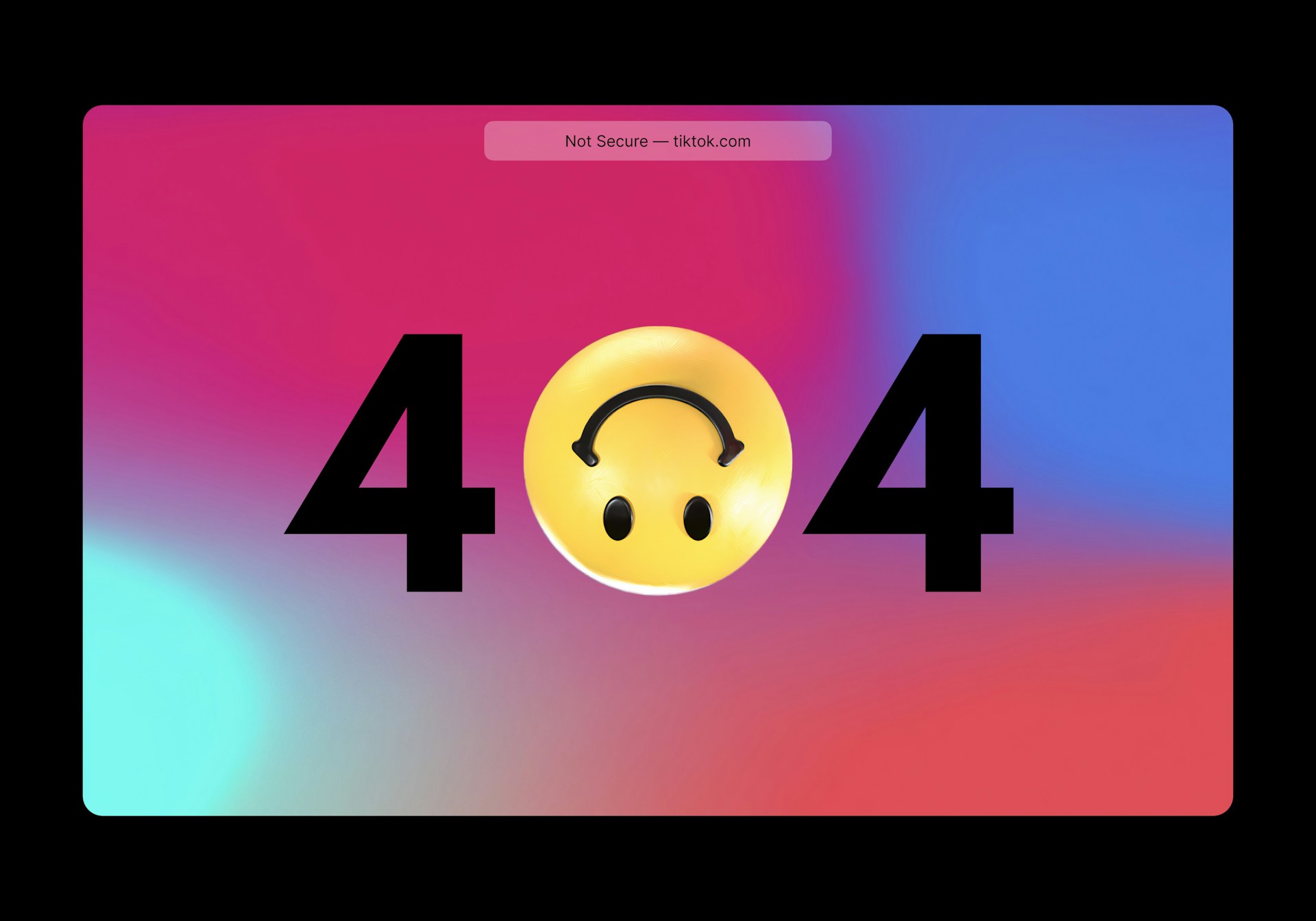

They ensure that when an old page is moved, updated or deleted, users and search engines don’t land on a “404” page.
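To make the idea concrete, here is a minimal Python sketch of what a server-side redirect looks like on the wire: a 3xx status line plus a Location header pointing at the new URL. The function name `build_redirect_response` and the example URL are illustrative assumptions, not part of any particular server.

```python
from http import HTTPStatus


def build_redirect_response(status: int, location: str) -> str:
    """Return the raw text of a minimal HTTP/1.1 redirect response."""
    phrase = HTTPStatus(status).phrase  # e.g. 301 -> "Moved Permanently"
    return (
        f"HTTP/1.1 {status} {phrase}\r\n"
        f"Location: {location}\r\n"  # where the browser or crawler should go next
        "Content-Length: 0\r\n"
        "\r\n"
    )


# Hypothetical example URL, for illustration only.
print(build_redirect_response(301, "https://example.com/new-page"))
```

The browser never shows this response to the visitor. It simply requests the URL in the Location header and displays that page instead.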

Photo by Erik Mclean on Unsplash


Importance of redirection for SEO

Redirects do more than fix broken links. They help preserve your site structure, rankings and authority, all of which support your SEO.

So here’s why you need to learn how to redirect a URL:

1. Preserves link juice

Redirects ensure the new URL continues to benefit from links and authority the previous page earned.

2. Preserves search rankings

Removing or changing URLs can harm your ranking position, but redirection helps preserve it.

3. Improves user experience

Visitors avoid errors such as the annoying “page no longer exists” message and land on the content they intended to reach rather than on irrelevant pages.

4. Helps search engines understand your site’s architecture

Search engines depend on proper URL redirection during site restructuring or migration.

5. Prevents duplicate content issues

Redirects are especially helpful during HTTPS migrations, domain restructuring or content consolidation, when the same content could otherwise be reachable at more than one URL. Google also prefers the HTTPS version of your webpage.

Types of redirects

1. Server-Side Redirects (Recommended for SEO)

These redirects are processed by the server before the page loads and send an HTTP status code to browsers and search engines.

The common HTTP 3xx status codes are:

- 301 – Moved Permanently

- 302 – Found (Temporary)

- 303 – See Other

- 304 – Not Modified (a cache-validation response, not a redirect)

- 307 – Temporary Redirect

- 308 – Permanent Redirect

These are the only redirects that reliably pass SEO value.
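As a quick reference, the codes above can be sorted by how they behave. The sketch below is a hedged summary in Python: the table `REDIRECTS` and the helper `describe` are illustrative names, and the behaviour notes reflect the HTTP specification rather than any search engine's ranking rules.

```python
from http import HTTPStatus

# Codes that actually send the client to a new URL, with a short note on
# permanence and on what happens to the request method.
REDIRECTS = {
    301: ("permanent", "POST may become GET"),
    302: ("temporary", "POST may become GET"),
    303: ("see other", "follow-up request uses GET"),
    307: ("temporary", "method and body preserved"),
    308: ("permanent", "method and body preserved"),
}


def describe(code: int) -> str:
    """Summarise a 3xx status code in one line."""
    phrase = HTTPStatus(code).phrase
    if code not in REDIRECTS:
        return f"{code} {phrase}: not a redirect"
    kind, method_note = REDIRECTS[code]
    return f"{code} {phrase}: {kind}, {method_note}"


for code in (301, 302, 303, 304, 307, 308):
    print(describe(code))
```

Note that 304 sits in the 3xx range but never sends anyone to a new URL, so it plays no part in redirecting traffic.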

2. Client-Side Redirects

Client-side redirects are executed by the browser after the page loads, not on the server. Because of that, they are less reliable for SEO and should only be used when a server-side redirect is unavailable.

They include:

i. Meta-refresh redirect

A meta-refresh redirect is a small piece of HTML placed inside the <head> of a page that instructs the browser to automatically load a new URL after a defined number of seconds.

ii. JavaScript redirect

A JavaScript redirect changes the browser’s current location using script execution. It is the most flexible redirect method but also the most expensive in terms of performance and reliability.

Photo by Ilya Pavlov on Unsplash

When to use a Redirect?

Not all moments require you to redirect a URL. In fact, over-using them can lead to slower page speeds and crawl budget issues with search engines.

However, a redirection becomes necessary when the original path to your content no longer exists or needs to be temporarily bypassed.

Here are some common situations that call for a redirection:

1. When changing domain name

You should redirect a URL when moving from oldbrand.com to newbrand.com to keep existing traffic.

By doing this, anyone using old bookmarks or incoming links will still reach the current content, and you don’t lose the SEO value linked to the old URLs.

It also helps search engines understand that the site has permanently moved.

2. When deleting a webpage

You should redirect a URL when you’ve deleted a page and need to send visitors to a newer, similar page. A redirect ensures they don’t hit a “404 Not Found”.

3. When changing content management systems

When changing platforms, keep in mind that URLs on the new platform may differ from the old ones. Without redirects, old links, bookmarks, backlinks and search engine entries break.

Implementing redirects helps preserve usability and SEO value – so the change doesn’t tank your site traffic.

4. When moving to a different country code domain

You should redirect a URL if you switch from a global domain to a region-specific one (or vice versa). Redirects ensure that visitors and search engine bots using the old domain are smoothly taken to the new one.

This transition is vital to avoid broken links and support SEO continuity, signaling to search engines that your authority has simply moved to a new home.

5. When switching to HTTPS

When moving from an insecure HTTP version of your site to the secure HTTPS version, you should redirect all old HTTP URLs to the new HTTPS versions.

This ensures users automatically use a secure connection and helps search engines treat the HTTPS version as canonical.

This move not only improves security for your users but also preserves the SEO value you have worked hard to build.
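The HTTP to HTTPS rule is simple enough to express in a few lines. Here is a minimal Python sketch, assuming URLs without an explicit port; the function name `https_target` is hypothetical and the example URLs are placeholders.

```python
from typing import Optional
from urllib.parse import urlsplit, urlunsplit


def https_target(url: str) -> Optional[str]:
    """Return the HTTPS version of an http:// URL, or None if no redirect is needed.

    Assumption: the URL carries no explicit port such as :80.
    """
    parts = urlsplit(url)
    if parts.scheme != "http":
        return None
    # Keep host, path and query string exactly as they were; only the scheme changes.
    return urlunsplit(parts._replace(scheme="https"))


print(https_target("http://example.com/shop?item=42"))
# https://example.com/shop?item=42
```

Keeping the path and query string intact matters here: each old HTTP URL should land on its exact HTTPS counterpart, not on the homepage.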

Photo by Miguel Ángel Padriñán Alba on Unsplash

6. When changing URLs

Whether you are changing URL paths, renaming specific pages, or restructuring entire folders and categories, your old URLs must redirect to the new ones.

Without these pointers, both users and search engines will hit dead ends.

By using redirects here, you preserve all inbound traffic and SEO value tied to those old paths, ensuring you don’t lose the digital footprint you’ve already established.
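When whole folders move, a prefix-based mapping is often easier to maintain than one rule per page. The Python sketch below illustrates the idea; the folder names in `PREFIX_MOVES` and the helper `new_path` are made up for the example.

```python
from typing import Optional

# Hypothetical folder moves: old prefix -> new prefix.
PREFIX_MOVES = {
    "/blog/": "/articles/",
    "/shop/shoes/": "/footwear/",
}


def new_path(old_path: str) -> Optional[str]:
    """Return the new path for a moved URL, or None if the path did not move."""
    # Check longer prefixes first so the most specific rule wins.
    for old_prefix in sorted(PREFIX_MOVES, key=len, reverse=True):
        if old_path.startswith(old_prefix):
            return PREFIX_MOVES[old_prefix] + old_path[len(old_prefix):]
    return None


print(new_path("/blog/seo-tips"))  # /articles/seo-tips
print(new_path("/about"))          # None
```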

Redirect Status Codes & Use Cases

| Goal | HTTP Code | Best Use Case | SEO/Browser Impact |
| --- | --- | --- | --- |
| Permanent Move | 301 or 308 | Domain changes, URL restructuring, switching to HTTPS, or platform migrations. | Transfers ranking power to the new URL; replaces the old link in search results. |
| Temporary Move | 302 or 307 | Maintenance windows, short-term marketing campaigns, or temporary downtime. | Tells search engines to keep the original URL in the index. |
| Post-Form Submission | 303 | Redirecting a user after a POST request (like a form) to a “Thank You” page. | Forces a fresh GET request to prevent “Double Submit” errors on refresh. |
| Cache Validation | 304 | When the browser asks if a resource has changed since the last visit. | Saves bandwidth; tells the browser to use its cached version. |
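The first two rows of the table boil down to two questions: is the move permanent, and must the request method (for example POST) survive the redirect? A small Python sketch of that decision, with the hypothetical helper name `choose_status`:

```python
def choose_status(permanent: bool, preserve_method: bool = False) -> int:
    """Pick a redirect status code from the two questions in the table."""
    if permanent:
        return 308 if preserve_method else 301
    return 307 if preserve_method else 302


print(choose_status(permanent=True))                         # 301
print(choose_status(permanent=False, preserve_method=True))  # 307
```

For ordinary page moves the method rarely matters, which is why 301 and 302 remain the usual choices. 307 and 308 become relevant mainly for forms and API endpoints.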

Methods to redirect a URL to another URL

1. Edge & CDN Redirects (Fastest and Most Scalable)

These redirects happen before your website server is even touched, which makes them one of the best ways to redirect a URL.

Platforms like Cloudflare execute redirects at the network edge, meaning the user is redirected from the closest global server, not your origin server.

Modern CDNs support both single and bulk redirect rules, which can return a 3xx status (301, 302, 307 or 308) directly from the edge.

Why this matters:

- Faster response time

- Lower server load

- Better performance at scale

Best for:

- Large website migrations

- Redirecting thousands of URLs

- Geo-based routing (e.g., country-specific pages)

Cons:

- This requires CDN access/configuration.

- Highly complex logic may require additional edge functions.

Example (_redirects file syntax, as used by hosts such as Cloudflare Pages):

/old-home / 301
/blog/* https://www.example.com/blog/:splat 308
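To show how a wildcard rule like the one in the example above resolves, here is a simplified Python sketch. It assumes first-match-wins ordering and supports only a trailing `*` with a `:splat` placeholder. Real edge platforms have their own, richer matching rules, so treat this purely as an illustration.

```python
from typing import Optional, Tuple

# (source pattern, destination, status), mirroring the example rules.
RULES = [
    ("/old-home", "/", 301),
    ("/blog/*", "https://www.example.com/blog/:splat", 308),
]


def match_rule(path: str) -> Optional[Tuple[int, str]]:
    """Return (status, destination) for the first matching rule, else None."""
    for source, destination, status in RULES:
        if source.endswith("*"):
            prefix = source[:-1]
            if path.startswith(prefix):
                # Whatever the wildcard matched is substituted for :splat.
                splat = path[len(prefix):]
                return status, destination.replace(":splat", splat)
        elif path == source:
            return status, destination
    return None


print(match_rule("/blog/2024/redirects"))
# (308, 'https://www.example.com/blog/2024/redirects')
```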

What most people miss:

Edge redirects are not just faster. They also reduce the number of steps between the user and the final page, which can help both Core Web Vitals and crawl efficiency.

Photo by Jonathan Kemper on Unsplash

2. Server-Level Redirects (Best for SEO and Control)

This is the gold standard when you want to redirect a URL and should be your default redirection method for SEO-critical pages.

Server-level redirects happen directly on your hosting server (e.g., Apache, Nginx), meaning they are:

- Immediate

- Clean, and

- Fully understood by search engines.

Why this works best:

Search engines trust server responses more than anything else. There’s no delay, no interpretation, no guesswork.

Best for:

- URL restructuring

- Domain migrations

- HTTP → HTTPS redirects

- API endpoint redirects.

Cons:

- Nginx requires server access

- Regex mistakes in Apache rules can break multiple pages

- Apache rules can become error-prone when they get complex

- IIS (web.config) requires Windows server access

Example Apache (.htaccess):

- Standard (301):

Redirect 301 /old-page https://example.com/new-page

- Permanent (308), preserving the request method (e.g., POST):

Redirect 308 /old-page https://example.com/new-page

- Temporary (307), preserving the request method:

Redirect 307 /promo https://example.com/lightning-sale

Example (Nginx):

- Standard (301), placed inside a server or location block:

return 301 https://example.com$request_uri;

- Permanent (308), preserving the request method (e.g., POST):

server {
    listen 80;
    server_name old.example.com;
    return 308 https://new.example.com$request_uri;
}

- Temporary (307), preserving the request method:

location /maintenance {
    return 307 https://example.com/maintenance;
}

Example IIS (web.config, requires the URL Rewrite module):

- 301 permanent redirect:

<system.webServer>
  <rewrite>
    <rules>
      <rule name="Redirect old">
        <match url="^old-page$" />
        <action type="Redirect" url="https://example.com/new-page" redirectType="Permanent" />
      </rule>
    </rules>
  </rewrite>
</system.webServer>

3. Application-Level Redirects (When Logic is Required)

An application-level redirect is a redirection instruction triggered by the software’s source code (e.g., PHP, Node.js, Python) after a request has already reached the web application.

Unlike server-level redirects (like Nginx or Apache), which happen at the “front door,” application-level redirects allow for complex logic and decision-making before the user is sent to a new URL.

Because these redirects are part of your code, they are ideal for dynamic conditions. You use them to check specific criteria before deciding where a user should go:

- Is the user logged in? If not, redirect to /login

- Did they submit a form? Redirect to /thank-you using a 303 status

- Are they coming from a specific page? If so, redirect them to a destination that fits that journey

Best for:

- Checkout flows

- Login systems

- Dynamic user behavior

Cons:

- Slightly slower than server-level redirects because the application must process the request first.

Example (PHP):

header("Location: https://example.com/new-page", true, 301);
exit();

Example (Node.js with Express):

res.redirect(303, '/thank-you');
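The same kind of decision logic can be sketched in Python, independent of any web framework. The function `decide_redirect` and the paths `/account` and `/contact` are hypothetical; `/login` and `/thank-you` come from the conditions listed earlier in this section.

```python
from typing import Optional, Tuple


def decide_redirect(method: str, path: str, logged_in: bool) -> Optional[Tuple[int, str]]:
    """Return (status, location) if the request should be redirected, else None."""
    # Hypothetical protected area: anonymous visitors are sent to the login page.
    if path.startswith("/account") and not logged_in:
        return 302, "/login"
    # After a form POST, a 303 makes the browser fetch the next page with GET,
    # so refreshing the thank-you page does not resubmit the form.
    if method == "POST" and path == "/contact":
        return 303, "/thank-you"
    return None


print(decide_redirect("GET", "/account/orders", logged_in=False))  # (302, '/login')
print(decide_redirect("POST", "/contact", logged_in=True))         # (303, '/thank-you')
```

In a real application, the returned status and location would be handed to whatever redirect helper your framework provides.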

Photo by Krishna Pandey on Unsplash

4. CMS-Based Redirects (Simple but Limited)

CMS-based redirects are redirection rules managed directly through a Content Management System’s (CMS) administrative interface, such as WordPress, Shopify, or Drupal.

Unlike server-level redirects that require editing configuration files like .htaccess, these are typically handled via built-in tools or third-party plugins, making them accessible to non-technical users.

Best for:

- Non-technical users

- Managing small sets of redirects

Con:

- Not ideal for large-scale or enterprise migrations.

What to understand:

These often rely on application-level logic behind the scenes, which means:

- More flexibility

- Slight performance trade-offs

5. Client-Side Redirects (Last Resort Only)

Client-side redirects are instructions executed directly within the user’s web browser, after the page has already started loading, rather than on the web server.

When a browser loads a page containing these instructions, it is told to immediately navigate to a different URL.

There are two primary ways to trigger a redirect on the client side:

- JavaScript Redirect:

The most common method, using the window.location object.

<script>
  window.location.href = "https://example.com/new-page";
</script>

- Meta Refresh:

A tag in the <head> of an HTML document telling the browser to redirect after a given time.

<meta http-equiv="refresh" content="0;url=https://example.com/new-page">

Cons:

- Slower (page loads first, then redirects)

- Less reliable for SEO

- Can confuse search engines

Best used when:

- You have no server access

- You need a temporary workaround

Best practices for HTML Meta Refresh:

- Set the delay to 0 seconds so the redirect is instant

- Make sure the new URL is in the sitemap and update canonical links to point to it.
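If you need to audit pages for meta refresh tags and confirm the delay is 0, a short script can help. The sketch below uses Python's standard html.parser module; the class `MetaRefreshFinder` and the function `find_meta_refresh` are illustrative names, and the parsing handles only the common `delay;url=target` form.

```python
from html.parser import HTMLParser
from typing import Optional, Tuple


class MetaRefreshFinder(HTMLParser):
    """Record the first <meta http-equiv="refresh"> tag as (delay, target)."""

    def __init__(self) -> None:
        super().__init__()
        self.refresh: Optional[Tuple[float, str]] = None

    def handle_starttag(self, tag, attrs):
        if tag != "meta" or self.refresh is not None:
            return
        attributes = dict(attrs)
        if (attributes.get("http-equiv") or "").lower() != "refresh":
            return
        # The content attribute looks like "0;url=https://example.com/new-page".
        delay, _, target = (attributes.get("content") or "").partition(";")
        target = target.strip()
        if target.lower().startswith("url="):
            target = target[4:]
        try:
            self.refresh = (float(delay.strip()), target)
        except ValueError:
            pass  # Malformed delay: treat the tag as not found.


def find_meta_refresh(html: str) -> Optional[Tuple[float, str]]:
    finder = MetaRefreshFinder()
    finder.feed(html)
    return finder.refresh


page = '<head><meta http-equiv="refresh" content="0;url=https://example.com/new-page"></head>'
print(find_meta_refresh(page))  # (0.0, 'https://example.com/new-page')
```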

Final Thoughts

Redirects are one of those invisible but powerful SEO components that protect rankings, site traffic and user experience.

They ensure nothing valuable is lost when your site grows, changes structure or evolves.

When using any of the methods to redirect a URL, make sure to:

- Use server-side redirects for the pages that matter most (site migrations, domain changes or major category restructures).

- Use plugin or hosting redirect tools for more routine redirects (old campaign URLs, small structural tweaks) if you prefer ease of use.

- Avoid relying on JavaScript redirects in critical SEO paths unless absolutely necessary.

- Treat Meta Refresh and JavaScript redirects as a last resort; if you use a meta refresh, keep the delay at 0.

Regardless of method, keep a redirect map (old URL → new URL), update internal links, update your sitemap, and monitor traffic and indexing status.
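A redirect map is also easy to sanity-check with a script. The Python sketch below, using the hypothetical helper `flatten_redirects` and made-up paths, points every old URL straight at its final destination so visitors and crawlers never pass through a chain of hops, and it raises an error if the map loops back on itself.

```python
from typing import Dict


def flatten_redirects(redirect_map: Dict[str, str]) -> Dict[str, str]:
    """Point every old URL straight at its final destination.

    Raises ValueError if the map contains a redirect loop.
    """
    flattened = {}
    for start in redirect_map:
        seen = {start}
        target = redirect_map[start]
        # Keep following the map while the target is itself redirected.
        while target in redirect_map:
            if target in seen:
                raise ValueError(f"redirect loop involving {target}")
            seen.add(target)
            target = redirect_map[target]
        flattened[start] = target
    return flattened


# Hypothetical map with a two-hop chain: /old -> /interim -> /new
print(flatten_redirects({"/old": "/interim", "/interim": "/new"}))
# {'/old': '/new', '/interim': '/new'}
```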

At SARMLife, we offer comprehensive site audit and website re-optimization services that cover identifying and resolving redirection issues.

We ensure that all your webpages are redirected appropriately without losing your SEO score for the affected pages.

Get our Website optimization Cleanup Plan and watch your website grow beautifully.

