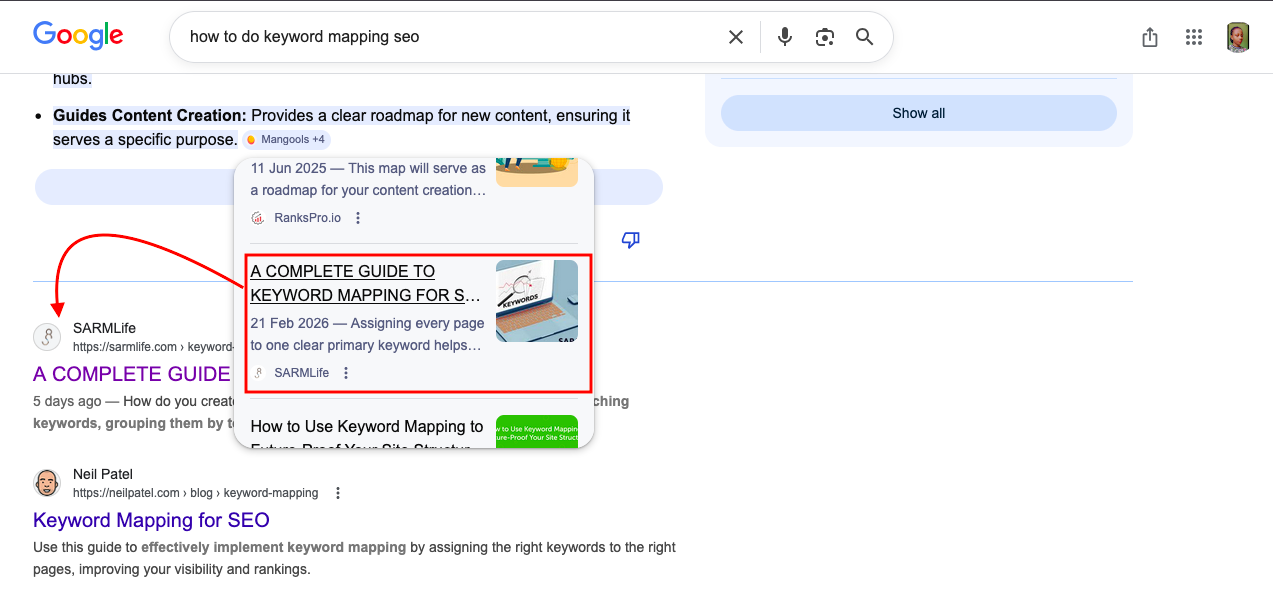

A few weeks ago, I published an optimized blog post that I was confident would perform well in search results. The content was solid, the on-page SEO was properly structured, and everything looked ready to rank. But after a few days, I searched for the post and realized something was wrong. I couldn’t find the page anywhere on the SERPs.

When I checked the indexing report in Google Search Console, I discovered that the URL was not on Google, which meant the page had not been crawled or indexed yet.

I submitted the URL manually for crawling and indexing, and within five days, the page started gaining visibility. It appeared on page one, was cited in AI Overviews, and even showed up in Google Images.

That experience reinforced something many website owners overlook. No matter how well a page is optimized, it cannot rank if search engines cannot properly access it. This is where the crawlability of a website becomes critical.

The crawlability of a website determines how easily search engines can discover, access, and understand the pages on your site.

Search engines like Google rely on automated bots called crawlers to scan websites and index their content. If those crawlers struggle to navigate your pages, important content may never appear in search results.

When technical issues block crawlers or make navigation difficult, search engines may miss valuable pages. This can limit your visibility even if your content is high quality.

Improving the crawlability of a website ensures that search engines can properly explore your content, understand your site structure, and include your pages in relevant search results.

At SARMLife, improving crawlability is often one of the first steps in our technical SEO audits because even the best content strategies struggle to perform when search engines cannot reach the pages behind them.

What Is the Crawlability of a Website?

The crawlability of a website refers to how easily search engine crawlers can discover and access the pages on a site.

Search engines like Google use automated bots to scan websites and follow links between pages. If those crawlers encounter blocked pages, broken links, slow loading speeds, or confusing site structures, they may struggle to explore the site properly.

When crawlability is strong, search engines can efficiently navigate the website, understand its content, and index important pages.

When crawlability is weak, some pages may never appear in search results even if the content is valuable.

Why Crawlability Matters for SEO

The crawlability of a website directly affects how well search engines can index and rank your pages.

If crawlers cannot access important content, that content cannot appear in search results.

Good crawlability helps search engines:

- Discover new pages faster

- Understand website structure

- Update search indexes efficiently

- Allocate crawl budget effectively

For this reason, improving crawlability is a core part of technical SEO optimization.

How the Crawlability of a Website Works

Before a page appears in search results, it usually goes through three key stages: crawling, indexing, and ranking.

The process begins when a search engine crawler discovers a page. This discovery often happens through:

- Internal links

- Backlinks from other websites

- Submitted XML sitemaps

Once a crawler lands on a page, it scans the content and follows the links it finds. In doing so, it gradually builds a map of your website’s structure.

However, crawlers also follow specific instructions provided by the website. The robots.txt file tells crawlers which URLs they may request, meta robots directives tell search engines whether a page may be indexed and whether its links should be followed, and canonical tags indicate which version of a page is the preferred one.
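To see which page-level instructions a URL is sending, you can read them straight from its HTML. The snippet below is a minimal sketch using only Python's standard library; the `CrawlDirectiveParser` class name and the sample markup are assumptions made for illustration, not part of any SEO tool.

```python
from html.parser import HTMLParser


class CrawlDirectiveParser(HTMLParser):
    """Collects the meta robots directives and canonical URL from a page's HTML."""

    def __init__(self):
        super().__init__()
        self.robots = []       # e.g. ["noindex", "nofollow"]
        self.canonical = None  # href of <link rel="canonical">

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "meta" and (attrs.get("name") or "").lower() == "robots":
            content = attrs.get("content") or ""
            self.robots = [d.strip().lower() for d in content.split(",") if d.strip()]
        elif tag == "link" and (attrs.get("rel") or "").lower() == "canonical":
            self.canonical = attrs.get("href")


# Hypothetical page markup, for illustration only.
html = """
<html><head>
  <meta name="robots" content="noindex, follow">
  <link rel="canonical" href="https://example.com/blog/crawlability/">
</head><body>...</body></html>
"""

parser = CrawlDirectiveParser()
parser.feed(html)
print(parser.robots)     # ['noindex', 'follow']
print(parser.canonical)  # https://example.com/blog/crawlability/
```

A page like this one can be crawled, but the `noindex` directive asks search engines to keep it out of the index.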

If the crawlability of a website is strong, search engines can easily navigate through the entire site and index important pages. But when technical barriers exist, crawlers may stop exploring early or fail to reach deeper pages.

This is why improving crawlability is a critical part of technical SEO services and website optimization strategies.

7 Factors That Can Affect the Crawlability of a Website

Several technical and structural issues can interfere with how search engines crawl your site.

Understanding these factors helps you identify and fix problems before they affect your search visibility.

Here are seven common factors that can affect the crawlability of your website:

1. Broken Links (404 Errors)

Broken links act like dead ends for search engine crawlers.

When a crawler follows a link that leads to a 404 error page, the exploration path stops there. If many broken links exist across a website, crawlers may struggle to discover deeper pages.

Broken links are often caused by:

- deleted pages

- incorrect URL formatting

- outdated content

- website migrations

- typing errors in internal links

Over time, a large number of broken links can weaken the crawlability of a website and reduce overall SEO performance.

Regular site audits help identify and fix these errors before they accumulate.
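As a rough illustration of what such an audit does, the sketch below walks a map of internal links and reports every link whose target answers with a 404 or 410. The `find_broken_links` function, the `get_status` callable, and the sample data are all hypothetical; in a real audit, `get_status` would make an HTTP request for each URL.

```python
def find_broken_links(link_map, get_status):
    """Return (source_page, target_url, status) for every link answering 404 or 410.

    link_map: dict of page URL -> list of URLs it links to.
    get_status: callable returning an HTTP status code for a URL.
    """
    broken = []
    cache = {}  # avoid checking the same target twice
    for source, targets in link_map.items():
        for target in targets:
            if target not in cache:
                cache[target] = get_status(target)
            if cache[target] in (404, 410):
                broken.append((source, target, cache[target]))
    return broken


# Hypothetical crawl data standing in for live HTTP responses.
statuses = {"/": 200, "/blog/": 200, "/old-post/": 404, "/services/": 200}
links = {
    "/": ["/blog/", "/services/"],
    "/blog/": ["/old-post/", "/services/"],
}

print(find_broken_links(links, lambda url: statuses.get(url, 404)))
# [('/blog/', '/old-post/', 404)]
```

Reporting the source page alongside the broken target matters, because the fix usually happens on the page that contains the link.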

2. Incorrect Robots.txt Rules

The robots.txt file acts as a guide for search engine crawlers. It tells them which sections of a website they are allowed to access and which ones they should avoid.

When configured properly, robots.txt helps search engines focus on important content. However, incorrect settings can accidentally block valuable pages from being crawled.

For example, a misconfigured rule could prevent search engines from accessing:

- blog posts

- product pages

- category pages

- service pages

When this happens, the crawlability of a website suffers because search engines cannot properly explore the site.
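One way to catch this kind of mistake is to test your rules against a few URLs you expect to be crawlable. The sketch below uses Python's built-in `urllib.robotparser`; the robots.txt content and the example.com URLs are hypothetical. Python's parser does simple prefix matching and may not treat wildcard patterns the way Google does, so take the result as a quick sanity check rather than a final verdict.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: the /blog/ rule was only meant to hide drafts.
robots_txt = """\
User-agent: *
Disallow: /wp-admin/
Disallow: /blog/
"""

parser = RobotFileParser()
parser.parse(robots_txt.splitlines())

for path in ["/blog/crawlability-guide/", "/services/seo-audit/", "/wp-admin/options.php"]:
    url = "https://example.com" + path
    print(path, "allowed" if parser.can_fetch("Googlebot", url) else "BLOCKED")
```

Here the single `Disallow: /blog/` line blocks every blog post, not just the drafts it was intended for.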

3. Poor Site Structure

Poor website structure can affect how easily crawlers navigate your pages.

If your website has too many layers or confusing navigation paths, crawlers may struggle to find important content.

Ideally, pages should be organized within a clear hierarchical structure, allowing both users and search engines to move logically from one section to another.

A well-structured website usually includes:

- logical navigation menus

- organized categories

- strong internal linking

- shallow click depth

Improving site architecture not only strengthens the crawlability of a website but also improves user experience and SEO performance.
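Click depth can be measured with a breadth-first search that starts at the homepage and follows internal links. The sketch below assumes you already have the internal link graph (for example, exported from a crawling tool); the `click_depths` function and the sample site are hypothetical.

```python
from collections import deque


def click_depths(link_graph, home="/"):
    """Breadth-first search from the homepage; returns {url: clicks from home}."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in link_graph.get(page, []):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


# Hypothetical internal link graph: page -> pages it links to.
site = {
    "/": ["/blog/", "/services/"],
    "/blog/": ["/blog/crawlability/"],
    "/blog/crawlability/": ["/blog/page-speed/"],
    "/orphan-page/": ["/"],
}

depths = click_depths(site)
orphans = sorted(set(site) - set(depths))  # pages no internal link leads to
print(depths)
print(orphans)  # ['/orphan-page/']
```

Pages with a high depth, or pages that never show up in the result at all, are the ones crawlers are most likely to miss.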

This is why site structure optimization is a core part of SARMLife’s technical SEO and website design/optimization services.

4. Server Errors

Server errors occur when a website fails to properly respond to a crawler’s request.

These errors usually appear as 5xx status codes, which indicate that something went wrong on the server side.

Common causes include:

- server overload

- hosting configuration problems

- maintenance issues

- software conflicts

If server errors occur frequently, crawlers may slow down or temporarily stop visiting the website. This prevents pages from being crawled or updated in search indexes.

Stable hosting infrastructure and regular monitoring are essential for maintaining the crawlability of a website.
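A simple monitoring check is to look at the share of 5xx responses your server returns. The sketch below assumes you have already pulled the status codes out of your server logs; the `server_error_rate` function and the sample list are hypothetical.

```python
from collections import Counter


def server_error_rate(status_codes):
    """Share of responses in the 5xx range (0.0 when the list is empty)."""
    if not status_codes:
        return 0.0
    errors = sum(1 for code in status_codes if 500 <= code <= 599)
    return errors / len(status_codes)


# Hypothetical status codes served to a crawler over one day.
responses = [200, 200, 503, 200, 500, 200, 301, 200, 503, 200]

print(Counter(code for code in responses if code >= 500))  # which 5xx codes occurred
print(f"{server_error_rate(responses):.0%}")  # 30%
```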

5. Redirect Chains and Redirect Loops

Redirects are useful when moving or updating pages, but they can cause problems when overused.

A redirect chain occurs when a URL redirects to another URL, which then redirects again to another page.

A redirect loop happens when pages redirect endlessly between each other.

Both issues waste crawl resources. With a chain, crawlers must follow several hops before they reach the final page. With a loop, they never reach a final page at all.

To protect the crawlability of a website, redirects should be minimized and always point directly to the final destination.
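If you can export your redirect rules as a simple source-to-target map, chains and loops are easy to spot. The sketch below is one way to do it; `trace_redirects`, `flatten`, and the sample rules are hypothetical names and data used for illustration.

```python
def trace_redirects(start, redirects, max_hops=10):
    """Follow a redirect map from `start`.

    Returns (path, kind) where kind is "ok", "chain", "loop", or "too_long".
    """
    path = [start]
    seen = {start}
    while path[-1] in redirects:
        nxt = redirects[path[-1]]
        if nxt in seen:
            return path + [nxt], "loop"
        path.append(nxt)
        seen.add(nxt)
        if len(path) - 1 > max_hops:
            return path, "too_long"
    hops = len(path) - 1
    return path, ("chain" if hops > 1 else "ok")


def flatten(redirects):
    """Point every source straight at its final destination, skipping loops."""
    flat = {}
    for source in redirects:
        path, kind = trace_redirects(source, redirects)
        if kind in ("ok", "chain"):
            flat[source] = path[-1]
    return flat


# Hypothetical redirect rules: one chain and one loop.
redirects = {"/old": "/newer", "/newer": "/newest", "/a": "/b", "/b": "/a"}

print(trace_redirects("/old", redirects))  # (['/old', '/newer', '/newest'], 'chain')
print(trace_redirects("/a", redirects))    # (['/a', '/b', '/a'], 'loop')
print(flatten(redirects))                  # {'/old': '/newest', '/newer': '/newest'}
```

The flattened map is what you would aim for: every old URL redirects once, directly to its final destination, and the looping rules are flagged for manual cleanup.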

6. Slow Page Load Speed

Page speed is important not only for users but also for search engines.

Search engine crawlers devote a limited amount of time and resources to each website they scan. This allowance is often referred to as the crawl budget.

When pages load slowly, crawlers spend more time processing each page. As a result, fewer pages are crawled during each visit.

Improving site speed helps search engines crawl more content and improves the crawlability of a website overall.
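A back-of-the-envelope calculation shows why. The sketch below assumes a deliberately simplified model: a fixed time window per visit and pages fetched one at a time. Real crawlers fetch in parallel and adjust their rate dynamically, so the numbers and the `pages_per_visit` function are hypothetical and only show the direction of the effect.

```python
def pages_per_visit(time_budget_s, avg_response_s):
    """Rough upper bound on pages fetched one at a time within a fixed time budget."""
    return int(time_budget_s // avg_response_s)


# Hypothetical numbers: a 60-second crawl window.
print(pages_per_visit(60, 2.0))  # 30
print(pages_per_visit(60, 0.5))  # 120
```

Under these assumptions, cutting the average response time from 2 seconds to half a second lets the same visit cover four times as many pages.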

Performance improvements may include:

- image optimization

- caching strategies

- faster hosting infrastructure

- code optimization


7. Duplicate and Thin Content

Duplicate content occurs when the same or very similar information appears on multiple pages.

When this happens, search engines struggle to determine which version of the page should be indexed and ranked. Link equity gets split across the duplicates, and crawlers spend time on pages that add nothing new.

Thin content presents a different challenge. Pages with very little useful information provide limited value, so search engines may crawl them less frequently.

Maintaining high-quality, unique content across your website improves both search visibility and the crawlability of a website.
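Exact duplicates are straightforward to detect by hashing each page's text after light normalization. The sketch below shows the idea; the `fingerprint` and `duplicate_groups` functions and the sample pages are hypothetical. It only catches pages whose text is identical apart from case and whitespace, so near-duplicates would need a fuzzier comparison.

```python
import hashlib
import re
from collections import defaultdict


def fingerprint(text):
    """Hash of the text after lowercasing and collapsing whitespace."""
    normalized = re.sub(r"\s+", " ", text).strip().lower()
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def duplicate_groups(pages):
    """pages: dict of URL -> body text. Returns lists of URLs sharing identical text."""
    groups = defaultdict(list)
    for url, text in pages.items():
        groups[fingerprint(text)].append(url)
    return [urls for urls in groups.values() if len(urls) > 1]


# Hypothetical pages: a sorted listing that repeats the main category page.
pages = {
    "/shoes/": "Red running shoes for every season.",
    "/shoes/?sort=price": "Red  running shoes for every season.\n",
    "/about/": "We are a small team of runners.",
}
print(duplicate_groups(pages))  # [['/shoes/', '/shoes/?sort=price']]
```

Groups like this one are typical candidates for a canonical tag pointing at the main version.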

How to Improve the Crawlability of a Website

Improving the crawlability of a website usually involves a combination of technical optimization and structural improvements.

Some effective strategies include:

- Submitting an optimized XML sitemap

- Strengthening internal linking

- Fixing broken links and redirect chains

- Improving site speed and performance

- Configuring robots.txt correctly

- Optimizing for mobile-first indexing

- Implementing structured data

- Performing regular technical SEO audits

Many of these improvements are typically handled during a technical SEO audit, which evaluates how search engines interact with your website.
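For the first item on that list, an XML sitemap is simply a structured list of the URLs you want crawled. Most CMS platforms and SEO plugins generate one for you, but the sketch below shows what the file contains, using Python's standard library. The `build_sitemap` function and the example.com entries are hypothetical.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(entries):
    """entries: list of (absolute URL, last-modified date as YYYY-MM-DD)."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in entries:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        ET.SubElement(url, "lastmod").text = lastmod
    xml_bytes = ET.tostring(urlset, encoding="utf-8", xml_declaration=True)
    return xml_bytes.decode("utf-8")


# Hypothetical pages to include.
sitemap = build_sitemap([
    ("https://example.com/", "2024-05-01"),
    ("https://example.com/blog/crawlability/", "2024-05-10"),
])
print(sitemap)
```

Once the file is live on your site, you can submit its URL in the Sitemaps section of Google Search Console.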

Final Thoughts

The crawlability of a website plays a foundational role in SEO because search engines cannot rank pages they cannot properly access.

Issues like broken links, server errors, redirect loops, poor site structure, and slow page speed can all prevent crawlers from discovering important content.

By fixing technical errors, improving site architecture, and monitoring crawl performance regularly, you make it easier for search engines to explore your website and index the pages that matter most.

Over time, strengthening the crawlability of a website leads to better visibility, faster indexing, and stronger overall search performance.

If you want to identify crawlability issues on your site, the team at SARMLife can perform a technical SEO audit to uncover hidden problems and help ensure search engines can fully access and understand your website.

Frequently Asked Questions

What is the difference between crawlability and indexability?

The crawlability of a website refers to how easily search engines can access and explore its pages. Indexability refers to whether a page that has been crawled can be stored in a search engine's index. For example, a page carrying a noindex directive can be crawled but will not be indexed.

A page must first be crawled before it can be indexed.

How do I check the crawlability of a website?

You can evaluate the crawlability of a website using tools such as:

- Google Search Console

- Screaming Frog SEO Spider

- Ahrefs Site Audit

- Semrush Site Audit

These tools identify crawl errors, broken links, blocked pages, and technical issues that may prevent search engines from accessing your content.

What blocks search engine crawlers from accessing a website?

Several technical issues can prevent crawlers from accessing pages, including:

- Incorrect robots.txt rules

- Broken internal links

- Redirect loops

- Server errors

- Slow page loading speed

- Poorly structured navigation

Fixing these issues helps improve the crawlability of a website and increases the likelihood of pages being indexed.

Does page speed affect crawlability?

Yes. Slow pages can reduce the crawl budget search engines allocate to your website.

If pages take too long to load, crawlers may scan fewer pages during each visit, which can delay indexing.
